Revolutionizing AI Networking: 8 Key Insights into the NVIDIA Spectrum-X and MRC Breakthrough

The race to build the world’s most powerful AI factories demands networking that keeps pace with the ambitions of AI itself. NVIDIA Spectrum-X Ethernet scale-out infrastructure stands at the forefront of that race, deployed by industry leaders who can’t afford to compromise on performance, resilience, or scale, including OpenAI, Microsoft, and Oracle. Now, with the introduction of Multipath Reliable Connection (MRC), this open, AI-native Ethernet fabric sets a new standard for gigascale AI. Here are eight key insights into this technology.

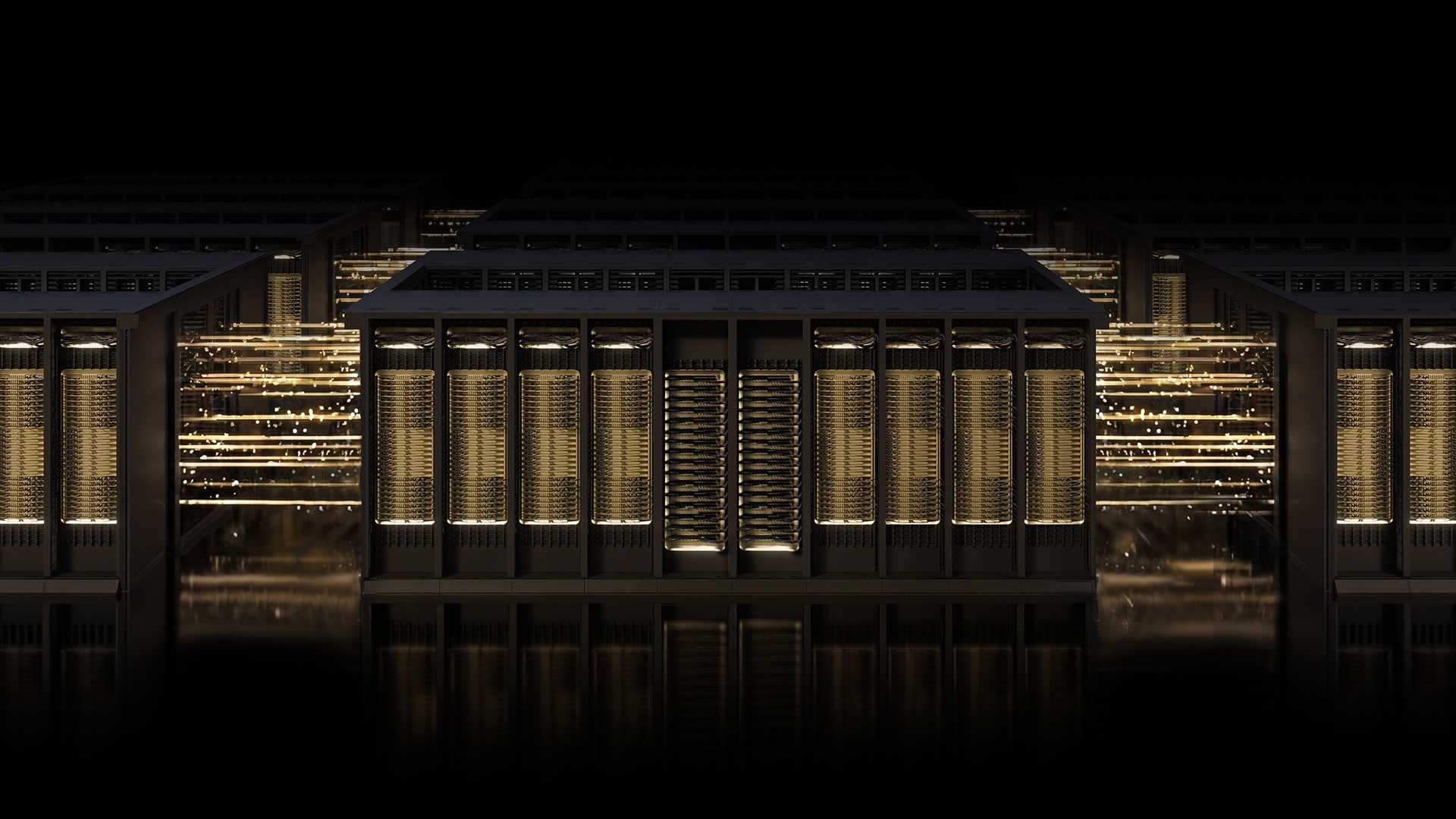

1. What Is the NVIDIA Spectrum-X Ethernet Fabric?

NVIDIA Spectrum-X is an open, AI-native Ethernet fabric designed specifically for the demands of modern AI workloads. Unlike general-purpose networking, it is purpose-built to handle the massive data flows and low-latency requirements of training and deploying large-scale AI models. By integrating advanced hardware, deep telemetry, and intelligent fabric control, Spectrum-X provides a foundation for high performance, resilience, and scalability. The platform already powers some of the largest AI factories in the world, including those operated by OpenAI, Microsoft, and Oracle, which shows that it can support the most ambitious AI projects without compromise.

2. Understanding Multipath Reliable Connection (MRC)

Multipath Reliable Connection (MRC) is an RDMA transport protocol that revolutionizes how data moves across AI training fabrics. Instead of relying on a single network path, MRC enables a single RDMA connection to distribute traffic across multiple paths simultaneously. Think of it as replacing a single-lane road with a cleverly laid-out street grid system paired with a real-time traffic app. This allows data to dynamically reroute around slowdowns and road closures, improving throughput, load balancing, and availability. MRC transforms AI networking from a bottleneck into a seamless, high-speed conduit for gigascale operations.
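To make the street-grid analogy concrete, here is a minimal Python sketch. It is an illustration only, not the MRC specification: it assumes a simple round-robin spray, one packet sent per microsecond, and made-up per-path latencies, and the names `spray` and `reassemble` are hypothetical. It shows that packets from a single connection can travel over different paths, arrive out of order, and still be placed correctly by sequence number.

```python
from itertools import cycle


def spray(num_packets, path_latencies_us):
    """Assign the packets of one connection to paths round-robin.

    Returns (seq, path_id, arrival_time_us) tuples sorted by arrival time.
    Latencies and the one-packet-per-microsecond send rate are illustrative.
    """
    arrivals = []
    paths = cycle(range(len(path_latencies_us)))
    for seq in range(num_packets):
        path = next(paths)
        send_time_us = seq  # assumption: one packet leaves per microsecond
        arrivals.append((seq, path, send_time_us + path_latencies_us[path]))
    return sorted(arrivals, key=lambda a: a[2])


def reassemble(arrivals, num_packets):
    """Place each packet by sequence number, regardless of arrival order."""
    buffer = [None] * num_packets
    for seq, path, _ in arrivals:
        buffer[seq] = path  # record which path delivered this slot
    return buffer


arrivals = spray(8, [5, 20, 9, 12])
arrival_order = [seq for seq, _, _ in arrivals]
message = reassemble(arrivals, 8)

print("arrival order:", arrival_order)
print("path per slot:", message)
```

Because the receiver writes each packet into the slot named by its sequence number, a slow path delays only its own packets rather than stalling the whole connection.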

3. Industry Leaders Rally Behind MRC

The adoption of MRC by major AI players underscores its potential. OpenAI, Microsoft, and Oracle have all integrated MRC into their AI infrastructure. According to Sachin Katti, head of industrial compute at OpenAI, “Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA. MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.” This production experience is strong evidence that MRC can deliver on the promise of gigascale AI networking.

4. A Collaborative Effort: NVIDIA, Microsoft, and OpenAI

The development of MRC is the result of a longstanding collaboration between NVIDIA, Microsoft, and OpenAI. Microsoft’s Fairwater and Oracle Cloud Infrastructure’s Abilene data center are two of the largest AI factories purpose-built for training and deploying frontier LLMs. Both rely on MRC to meet their performance, scale, and efficiency requirements. This partnership shows how industry leaders are working together to push the boundaries of AI infrastructure. The Spectrum-X Ethernet platform, optimized for MRC, provides the network foundation needed to run large-scale AI models with confidence and reliability.

5. Open Specification Through the Open Compute Project

After proving itself in production on NVIDIA Spectrum-X Ethernet hardware, MRC has been released as an open specification through the Open Compute Project (OCP). This move broadens access to advanced AI networking, allowing a wider ecosystem of developers and organizations to adopt and build on the protocol. By publishing MRC as an open specification, NVIDIA makes the benefits of multipath RDMA (higher throughput, better load balancing, and greater resilience) available to any implementer, which should accelerate the development of gigascale AI across the industry.

6. Boosting GPU Utilization Through Intelligent Load Balancing

One of MRC’s key performance benefits is its ability to improve GPU utilization. By load-balancing traffic across all available paths, MRC helps every GPU receive the bandwidth it needs throughout a training run. Even under heavy congestion, the protocol dynamically avoids overloaded paths in real time, sustaining high bandwidth and preventing slowdowns. This results in higher overall training efficiency, as GPUs spend more time computing and less time waiting for data. For gigascale AI, this translates into faster model training and shorter time to results.
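The difference between pinning flows to paths and balancing per packet can be sketched in a few lines of Python. This is a toy model under stated assumptions, not MRC’s actual algorithm: the modulo "hash" stands in for a static ECMP-style hash, "least-loaded path" is one plausible balancing policy chosen for illustration, and the function names and flow sizes are hypothetical.

```python
def static_hash_load(flows, num_paths):
    """Pin each flow to one path (modulo used as a stand-in for a hash)."""
    load = [0] * num_paths
    for flow_id, size in flows:
        load[flow_id % num_paths] += size
    return load


def adaptive_load(flows, num_paths, congested=()):
    """Send each packet on the least-loaded path that is not marked congested."""
    load = [0] * num_paths
    candidates = [p for p in range(num_paths) if p not in congested]
    for _, size in flows:
        for _ in range(size):
            path = min(candidates, key=lambda p: load[p])
            load[path] += 1
    return load


# (flow_id, packets): three large flows collide on the same hashed path.
flows = [(0, 100), (4, 100), (8, 100), (1, 10)]

pinned = static_hash_load(flows, 4)
balanced = adaptive_load(flows, 4)
rerouted = adaptive_load(flows, 4, congested={2})

print("static hash:     ", pinned)
print("per-packet:      ", balanced)
print("avoiding path 2: ", rerouted)
```

In this toy run the static hash piles three large flows onto one path while two paths sit idle, whereas per-packet balancing keeps path loads within one packet of each other and can skip a path flagged as congested.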

7. Resilience and Fault Tolerance at Scale

When data loss occurs in traditional networks, it can cause significant delays and GPU idle time. MRC addresses this with intelligent retransmission, enabling rapid, precise recovery from short-lived interruptions. By minimizing the impact of packet loss, the protocol helps maintain the continuity of long-running training jobs. This resilience is critical for AI factories, where even a few seconds of downtime can cascade into hours of lost productivity. MRC ensures that the network remains robust and reliable, even in the face of inevitable failures, keeping AI workloads on track.
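The source does not describe how MRC’s retransmission works internally, so the following Python sketch only illustrates why precise recovery matters. It compares a simplified go-back-N policy (resend everything from the first lost packet onward) with a selective policy (resend only what was lost). Both functions and the loss pattern are hypothetical, and treating MRC as selective retransmission is an assumption made for illustration.

```python
def go_back_n_resends(num_packets, lost):
    """Simplified go-back-N: resend from the first lost packet to the end."""
    if not lost:
        return []
    return list(range(min(lost), num_packets))


def selective_resends(num_packets, lost):
    """Selective recovery: resend only the packets that were actually lost."""
    return sorted(seq for seq in lost if 0 <= seq < num_packets)


num_packets = 1000
lost = {3, 7}  # illustrative loss pattern from a brief interruption

coarse = go_back_n_resends(num_packets, lost)
precise = selective_resends(num_packets, lost)

print("go-back-N resends:", len(coarse), "packets")
print("selective resends:", len(precise), "packets")
```

With two packets lost early in a 1,000-packet transfer, the coarse policy resends 997 packets and the selective one resends 2, which is the kind of gap that separates a brief hiccup from visible GPU idle time.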

8. Enhanced Operational Visibility and Control

Beyond performance, MRC provides administrators with fine-grained visibility and control over traffic paths. Deep telemetry from the Spectrum-X hardware allows operators to monitor network conditions in real time, identify bottlenecks, and troubleshoot issues quickly. This visibility reduces the complexity of operating large-scale AI networks. With MRC, AI infrastructure teams gain the tools they need to optimize performance, maintain reliability, and respond proactively to changing demands, making gigascale AI more manageable.

As AI continues to push the boundaries of what’s possible, networking technology must evolve to keep pace. NVIDIA Spectrum-X with MRC represents a major leap forward, offering an open, AI-native Ethernet fabric that delivers unmatched performance, resilience, and scalability. By enabling multipath communication, load balancing, and intelligent retransmission, MRC sets the standard for gigascale AI infrastructure. With industry leaders already reaping the benefits, this technology is poised to power the next generation of AI factories worldwide.